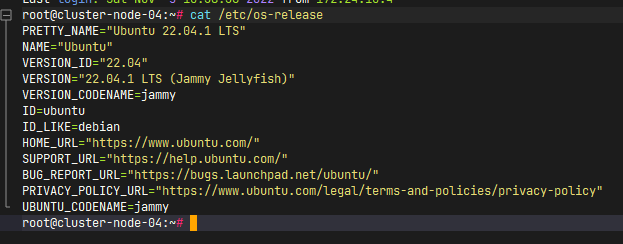

Machine configuration and OS version:

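Set hosts, sync the system time, disable SELinux, the firewall and swap, and enable IPv4 forwarding

Set hosts (all machines)

This cluster consists of 3 masters and 4 nodes, plus one Harbor host for storing images. The details are as follows: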

    172.24.10.105 cluster-master-01
    172.24.10.106 cluster-master-02
    172.24.10.107 cluster-master-03
    172.24.10.108 cluster-node-01
    172.24.10.109 cluster-node-02
    172.24.10.110 cluster-node-03
    172.24.10.111 cluster-node-04
    172.24.10.102 harbor.xiaobai1202.com
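The entries above have to be added on every machine, so it helps if the step can be re-run without creating duplicate lines. A minimal sketch follows; the helper name `add_cluster_hosts` and its file argument are illustrative and not part of the original, only the addresses and hostnames are.

```shell
#!/usr/bin/env bash
# add_cluster_hosts is a hypothetical helper: it appends each cluster entry to
# the given hosts file unless the hostname already appears in that file.
add_cluster_hosts() {
  local hosts_file="$1" ip name
  while read -r ip name; do
    # -w matches the hostname as a whole word, -F treats it as a literal string.
    if ! grep -qwF -- "$name" "$hosts_file" 2>/dev/null; then
      printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
    fi
  done <<'EOF'
172.24.10.105 cluster-master-01
172.24.10.106 cluster-master-02
172.24.10.107 cluster-master-03
172.24.10.108 cluster-node-01
172.24.10.109 cluster-node-02
172.24.10.110 cluster-node-03
172.24.10.111 cluster-node-04
172.24.10.102 harbor.xiaobai1202.com
EOF
}
```

On a real machine this would be called as root with `add_cluster_hosts /etc/hosts`.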

Disable swap, SELinux and the firewall (all machines)

    # Disable swap temporarily
    swapoff -a
    # Disable swap permanently: comment out the swap mount
    vi /etc/fstab
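Editing `/etc/fstab` by hand works, but the same change can be scripted. The sketch below assumes a conventional fstab layout in which swap entries carry a whitespace-delimited `swap` field; the helper name is hypothetical.

```shell
#!/usr/bin/env bash
# comment_out_swap is a hypothetical helper: it prefixes "#" to every
# uncommented line that contains a whitespace-delimited "swap" field,
# and keeps the previous version of the file as <file>.bak.
comment_out_swap() {
  sed -E -i.bak '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$1"
}
```

Run as root, `comment_out_swap /etc/fstab` would leave already commented lines and non-swap mounts untouched.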

    # If the config file exists
    sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config
    # If it does not exist, create it
    echo 'SELINUX=disabled' >> /etc/selinux/config

    ufw disable
    service ufw stop
    systemctl disable ufw

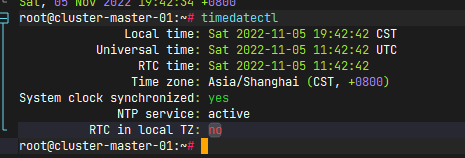

Sync the system time (all machines)

First, set the system time zone to UTC+8.

    # List the supported time zones
    timedatectl list-timezones
    # If this prints a line, Beijing time is supported
    timedatectl list-timezones | grep 'Asia/Shanghai'
    # Set the time zone to Beijing time
    timedatectl set-timezone Asia/Shanghai
    # Show the current time zone
    timedatectl

Output like the following means the setting took effect:

Then synchronize the clock against a time server:

    # Turn off the default synchronization
    timedatectl set-ntp false
    # Install ntpdate
    apt install ntpdate
    # Sync the time
    /usr/sbin/ntpdate ntp.aliyun.com
    # Schedule a daily sync
    crontab -e
    # Add the following line
    #
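The crontab line itself is not shown in the original. Purely as an illustration, and assuming a daily run at 03:00 (an arbitrary choice) against the same Aliyun server, an entry could be generated like this; the helper name is hypothetical.

```shell
#!/usr/bin/env bash
# ntp_cron_line is a hypothetical helper that prints one crontab entry.
# The 03:00 schedule is an assumption, not taken from the original.
ntp_cron_line() {
  local server="${1:-ntp.aliyun.com}"
  printf '0 3 * * * /usr/sbin/ntpdate %s\n' "$server"
}
```

To install it without opening an editor, one option is `(crontab -l 2>/dev/null; ntp_cron_line) | crontab -`.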

Enable forwarding (all machines)

    # Step 1: load the kernel modules
    modprobe overlay
    modprobe br_netfilter
    lsmod | grep br_netfilter
    # Step 2: write the configuration
    cat > /etc/sysctl.d/k8s.conf <<EOF
    net.bridge.bridge-nf-call-ip6tables = 1
    net.bridge.bridge-nf-call-iptables = 1
    net.ipv4.ip_forward = 1
    EOF
    # Step 3: reload the configuration
    sysctl -p /etc/sysctl.d/k8s.conf
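Note that `modprobe` only loads the modules for the current boot. On systemd-based systems, files under `/etc/modules-load.d/` list modules to load at every boot, so a small file there makes the setting persistent. A minimal sketch; the helper name and the file name `k8s.conf` are assumptions.

```shell
#!/usr/bin/env bash
# write_modules_conf is a hypothetical helper: it writes the two module names
# used in this guide, one per line, to the given file.
write_modules_conf() {
  printf '%s\n' overlay br_netfilter > "$1"
}
```

As root this could be called as `write_modules_conf /etc/modules-load.d/k8s.conf`.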

Install the container runtime containerd (all machines)

Installation reference: https://github.com/containerd/containerd/blob/main/docs/getting-started.md

    wget https://github.com/containerd/containerd/releases/download/v1.6.9/containerd-1.6.9-linux-amd64.tar.gz
    tar Cxzvf /usr/local containerd-1.6.9-linux-amd64.tar.gz

    cat > /usr/lib/systemd/system/containerd.service <<EOF
    # Copyright The containerd Authors.
    #
    # Licensed under the Apache License, Version 2.0 (the "License");
    # you may not use this file except in compliance with the License.
    # You may obtain a copy of the License at
    #
    #     http://www.apache.org/licenses/LICENSE-2.0
    #
    # Unless required by applicable law or agreed to in writing, software
    # distributed under the License is distributed on an "AS IS" BASIS,
    # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
    # See the License for the specific language governing permissions and
    # limitations under the License.

    [Unit]
    Description=containerd container runtime
    Documentation=https://containerd.io
    After=network.target local-fs.target

    [Service]
    # uncomment to enable the experimental sbservice (sandboxed) version of containerd/cri integration
    # Environment="ENABLE_CRI_SANDBOXES=sandboxed"
    ExecStartPre=-/sbin/modprobe overlay
    ExecStart=/usr/local/bin/containerd

    Type=notify
    Delegate=yes
    KillMode=process
    Restart=always
    RestartSec=5
    # Having non-zero Limit*s causes performance problems due to accounting overhead
    # in the kernel. We recommend using cgroups to do container-local accounting.
    LimitNPROC=infinity
    LimitCORE=infinity
    LimitNOFILE=infinity
    # Comment TasksMax if your systemd version does not supports it.
    # Only systemd 226 and above support this version.
    TasksMax=infinity
    OOMScoreAdjust=-999

    [Install]
    WantedBy=multi-user.target
    EOF

    systemctl daemon-reload
    systemctl enable --now containerd
    service containerd status

    wget https://github.com/opencontainers/runc/releases/download/v1.1.4/runc.amd64
    install -m 755 runc.amd64 /usr/local/sbin/runc

    mkdir -p /opt/cni/bin
    wget https://github.com/containernetworking/plugins/releases/download/v1.1.1/cni-plugins-linux-amd64-v1.1.1.tgz
    tar Cxzvf /opt/cni/bin cni-plugins-linux-amd64-v1.1.1.tgz
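Deriving the download URL and the archive name from a single version string keeps the `wget` and `tar` steps in agreement when the version is bumped. A small sketch; both helper names are hypothetical, and the URL pattern is simply the one used in the commands above.

```shell
#!/usr/bin/env bash
# Hypothetical helpers: build the containerd archive name and release URL
# from a version (without the leading "v") and an architecture (default amd64).
containerd_tarball() {
  printf 'containerd-%s-linux-%s.tar.gz' "$1" "${2:-amd64}"
}

containerd_url() {
  printf 'https://github.com/containerd/containerd/releases/download/v%s/%s' \
    "$1" "$(containerd_tarball "$1" "${2:-amd64}")"
}
```

With these, the first two commands become `wget "$(containerd_url 1.6.9)"` and `tar Cxzvf /usr/local "$(containerd_tarball 1.6.9)"`.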

Configure containerd to use the systemd cgroup driver (all machines)

First, generate the default configuration:

    sudo mkdir -p /etc/containerd/
    containerd config default | sudo tee /etc/containerd/config.toml

Then switch the cgroup driver in that file to systemd:

    sed -i 's/SystemdCgroup = false/SystemdCgroup = true/g' /etc/containerd/config.toml
    # sandbox_image = "registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6"

    systemctl restart containerd.service
    systemctl enable containerd.service
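The commented `sandbox_image` line suggests that the pause image should also point at the Aliyun mirror. Assuming that is the intent, both settings can be changed in one pass. The helper below is a sketch with a hypothetical name; it relies on the `key = value` layout that `containerd config default` produces.

```shell
#!/usr/bin/env bash
# configure_containerd is a hypothetical helper: it sets SystemdCgroup to true
# and replaces the sandbox_image value, preserving each line's indentation.
# A copy of the previous file is kept as <file>.bak.
configure_containerd() {
  local config="$1" image="$2"
  sed -i.bak -E \
    -e 's|^([[:space:]]*)SystemdCgroup = false|\1SystemdCgroup = true|' \
    -e "s|^([[:space:]]*)sandbox_image = .*|\\1sandbox_image = \"${image}\"|" \
    "$config"
}
```

For example: `configure_containerd /etc/containerd/config.toml registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6`, followed by a restart of containerd.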

Bootstrap the cluster

Required tools: kubeadm, kubelet, kubectl, crictl (all machines)

    wget https://github.com/kubernetes-sigs/cri-tools/releases/download/v1.25.0/crictl-v1.25.0-linux-amd64.tar.gz
    tar Cxzvf /usr/local/bin crictl-v1.25.0-linux-amd64.tar.gz

crictl configuration (crictl reads `/etc/crictl.yaml` by default):

    runtime-endpoint: unix:///run/containerd/containerd.sock
    image-endpoint: unix:///run/containerd/containerd.sock
    timeout: 10
    debug: false
    pull-image-on-create: false
    disable-pull-on-run: false

    apt install -y apt-transport-https ca-certificates curl

    curl -fsSLo /usr/share/keyrings/kubernetes-archive-keyring.gpg https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg

    echo "deb [signed-by=/usr/share/keyrings/kubernetes-archive-keyring.gpg] http://mirrors.aliyun.com/kubernetes/apt kubernetes-xenial main" | sudo tee /etc/apt/sources.list.d/kubernetes.list

    apt update

    # Install all three at the pinned version in one step
    apt install kubelet=1.25.3-00 kubeadm=1.25.3-00 kubectl=1.25.3-00

    # Hold the versions
    apt-mark hold kubelet kubeadm kubectl

Install and configure keepalived on the masters for high availability (master nodes only)

    apt install keepalived libul*

On each master node, create k8s.conf under /etc/keepalived. The content is identical on every node except for the `priority` value inside `vrrp_instance`.

    global_defs {
        router_id LVS_DEVEL
    }

    vrrp_instance VI_1 {
        state BACKUP
        nopreempt
        interface ens32
        virtual_router_id 80
        priority 100
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass just0kk
        }
        virtual_ipaddress {
            172.24.10.201/24
        }
    }

    virtual_server 172.24.10.201 6443 {
        delay_loop 6
        lb_algo loadbalance
        lb_kind DR
        net_mask 255.255.255.0
        persistence_timeout 0
        protocol TCP

        real_server 172.24.10.105 6443 {
            weight 1
            SSL_GET {
                url {
                    path /healthz
                    status_code 200
                }
                connect_timeout 3
                nb_get_retry 3
                delay_before_retry 3
            }
        }

        real_server 172.24.10.106 6443 {
            weight 1
            SSL_GET {
                url {
                    path /healthz
                    status_code 200
                }
                connect_timeout 3
                nb_get_retry 3
                delay_before_retry 3
            }
        }

        real_server 172.24.10.107 6443 {
            weight 1
            SSL_GET {
                url {
                    path /healthz
                    status_code 200
                }
                connect_timeout 3
                nb_get_retry 3
                delay_before_retry 3
            }
        }
    }
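Since only the `priority` value differs between masters, the same file can be copied to every node and patched in place. The sketch below uses a hypothetical helper name; which priority each master gets is left to the operator.

```shell
#!/usr/bin/env bash
# set_vrrp_priority is a hypothetical helper: it rewrites the numeric value on
# the "priority" line of a keepalived config, keeping the line's indentation.
# A copy of the previous file is kept as <file>.bak.
set_vrrp_priority() {
  local config="$1" priority="$2"
  sed -i.bak -E "s/^([[:space:]]*priority)[[:space:]]+[0-9]+/\\1 ${priority}/" "$config"
}
```

For instance, `set_vrrp_priority /etc/keepalived/k8s.conf 90` on the second master, with another value of your choosing on the third.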

Once the configuration file is in place, start keepalived:

    systemctl enable keepalived && systemctl start keepalived && systemctl status keepalived

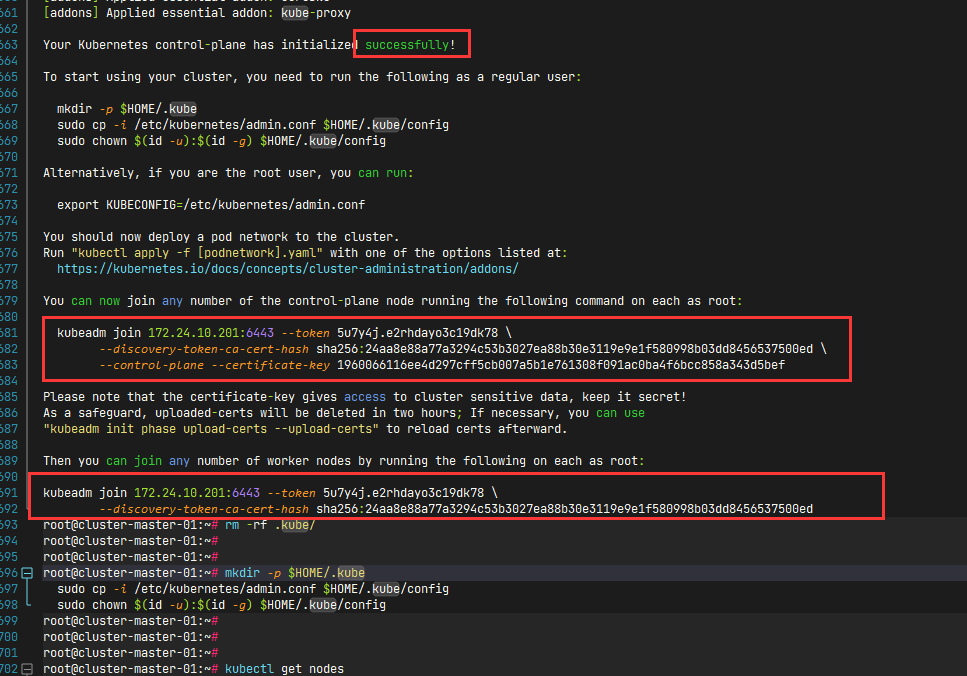

Bootstrap the cluster (master nodes)

Run the following on one master node:

    kubeadm init \
      --apiserver-advertise-address 0.0.0.0 \
      --apiserver-bind-port 6443 \
      --control-plane-endpoint 172.24.10.201 \
      --image-repository registry.cn-hangzhou.aliyuncs.com/google_containers \
      --kubernetes-version v1.25.3 \
      --pod-network-cidr 172.16.0.0/16 \
      --service-cidr 10.221.0.0/16 \
      --service-dns-domain k8s.xiaobai1202.com \
      --upload-certs
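The same settings can also be kept in a kubeadm configuration file, which is easier to review and version than a long command line. The sketch below writes a `ClusterConfiguration` using the `kubeadm.k8s.io/v1beta3` API that kubeadm 1.25 accepts. The helper name and output file name are hypothetical, and the advertise address and bind port are left at their defaults rather than set explicitly.

```shell
#!/usr/bin/env bash
# write_kubeadm_config is a hypothetical helper: it writes a ClusterConfiguration
# carrying the same values as the command-line flags to the given file.
write_kubeadm_config() {
  cat > "$1" <<'EOF'
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
kubernetesVersion: v1.25.3
controlPlaneEndpoint: "172.24.10.201:6443"
imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers
networking:
  podSubnet: 172.16.0.0/16
  serviceSubnet: 10.221.0.0/16
  dnsDomain: k8s.xiaobai1202.com
EOF
}
```

Usage would then be `write_kubeadm_config kubeadm-config.yaml` followed by `kubeadm init --config kubeadm-config.yaml --upload-certs`.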

When the command finishes, the result looks like this:

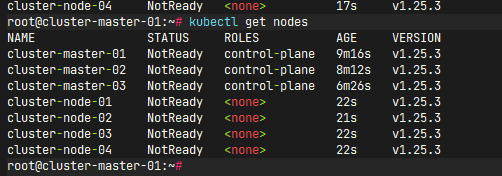

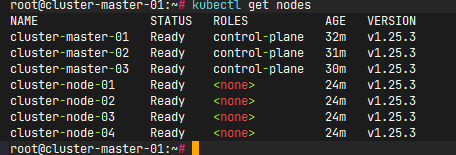

Finally, check the cluster status:

    # Run on any master
    kubectl get nodes

Install the network plugin

Calico is used here: https://raw.githubusercontent.com/projectcalico/calico/v3.24.4/manifests/tigera-operator.yaml
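In Calico's operator-based install, the operator manifest is followed by a `custom-resources.yaml` file whose IP pool defaults to `192.168.0.0/16`. That differs from the `--pod-network-cidr 172.16.0.0/16` passed to `kubeadm init`, so the pool has to be adjusted before applying it. The sketch below assumes that install flow and that the file sits next to the operator manifest in the same v3.24.4 `manifests/` directory; the helper name is hypothetical.

```shell
#!/usr/bin/env bash
# set_calico_cidr is a hypothetical helper: it replaces the value of every
# "cidr:" line in a manifest with the given CIDR, keeping the indentation.
# A copy of the previous file is kept as <file>.bak.
set_calico_cidr() {
  local manifest="$1" cidr="$2"
  sed -i.bak -E "s|^([[:space:]]*cidr:)[[:space:]]*.*|\\1 ${cidr}|" "$manifest"
}
```

A possible sequence: `kubectl create -f` the tigera-operator.yaml URL above, download custom-resources.yaml, run `set_calico_cidr custom-resources.yaml 172.16.0.0/16`, then `kubectl create -f custom-resources.yaml`.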

Then check the node status:
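Nodes usually stay `NotReady` until the network plugin is running, so it can be handy to count how many are still not ready. The filter below is a sketch that reads the default table output of `kubectl get nodes` from standard input; the helper name is hypothetical.

```shell
#!/usr/bin/env bash
# count_not_ready is a hypothetical helper: it skips the header row and counts
# the rows whose STATUS column (second field) does not start with "Ready".
count_not_ready() {
  awk 'NR > 1 && $2 !~ /^Ready/ { n++ } END { print n + 0 }'
}
```

Typical use is `kubectl get nodes | count_not_ready`. To block until every node is ready instead, `kubectl wait --for=condition=Ready nodes --all --timeout=300s` is another option.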